Explore more of our blogs

AI Model Risk 101: The File is the Attack Surface

Enterprise teams carefully vet traditional software packages, yet AI models downloaded from public repositories are often treated as simple data files, even though many formats can execute code when loaded. This creates a significant and underrecognized supply-chain risk, where a malicious model file can silently compromise systems the moment it is opened.

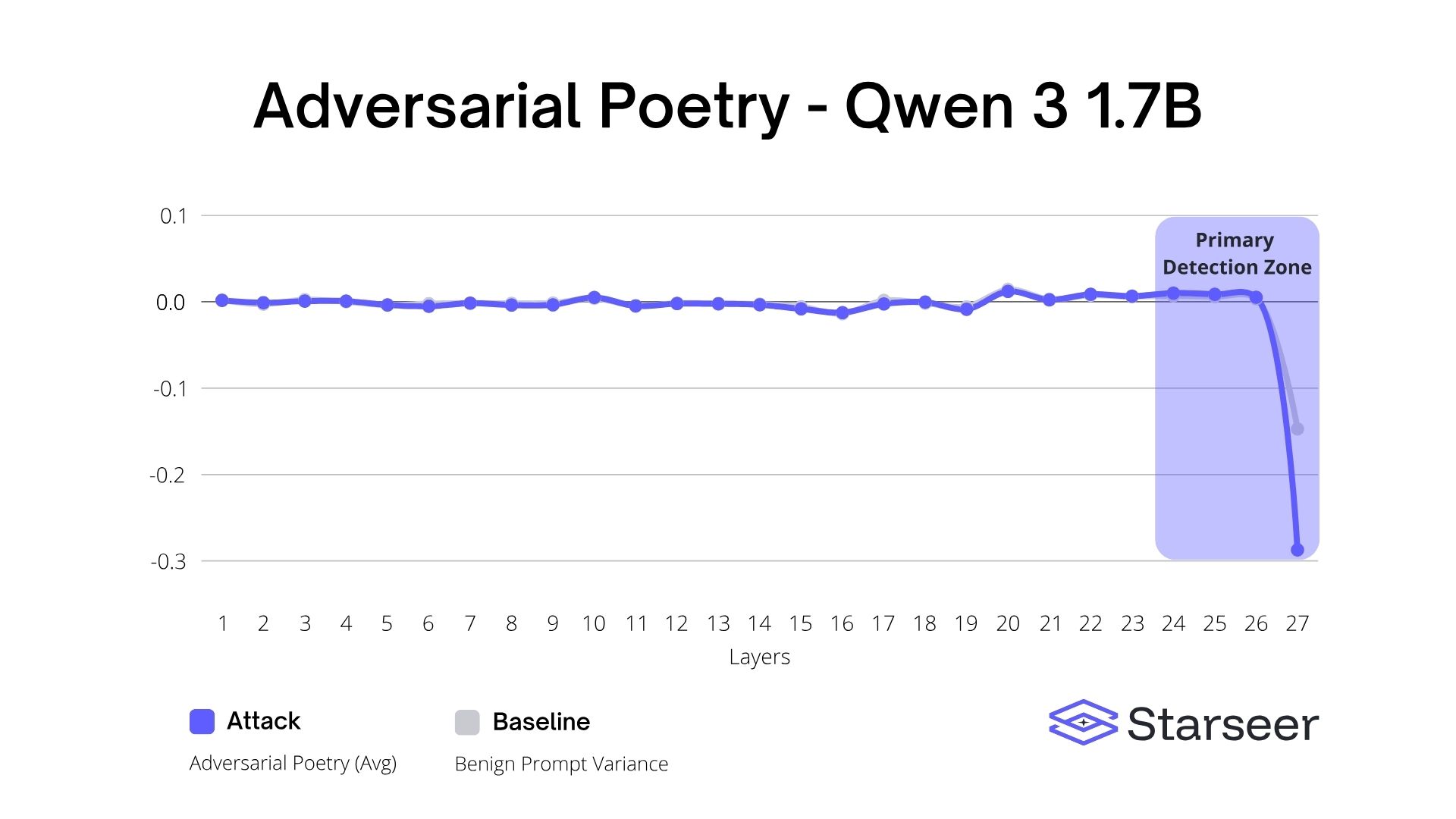

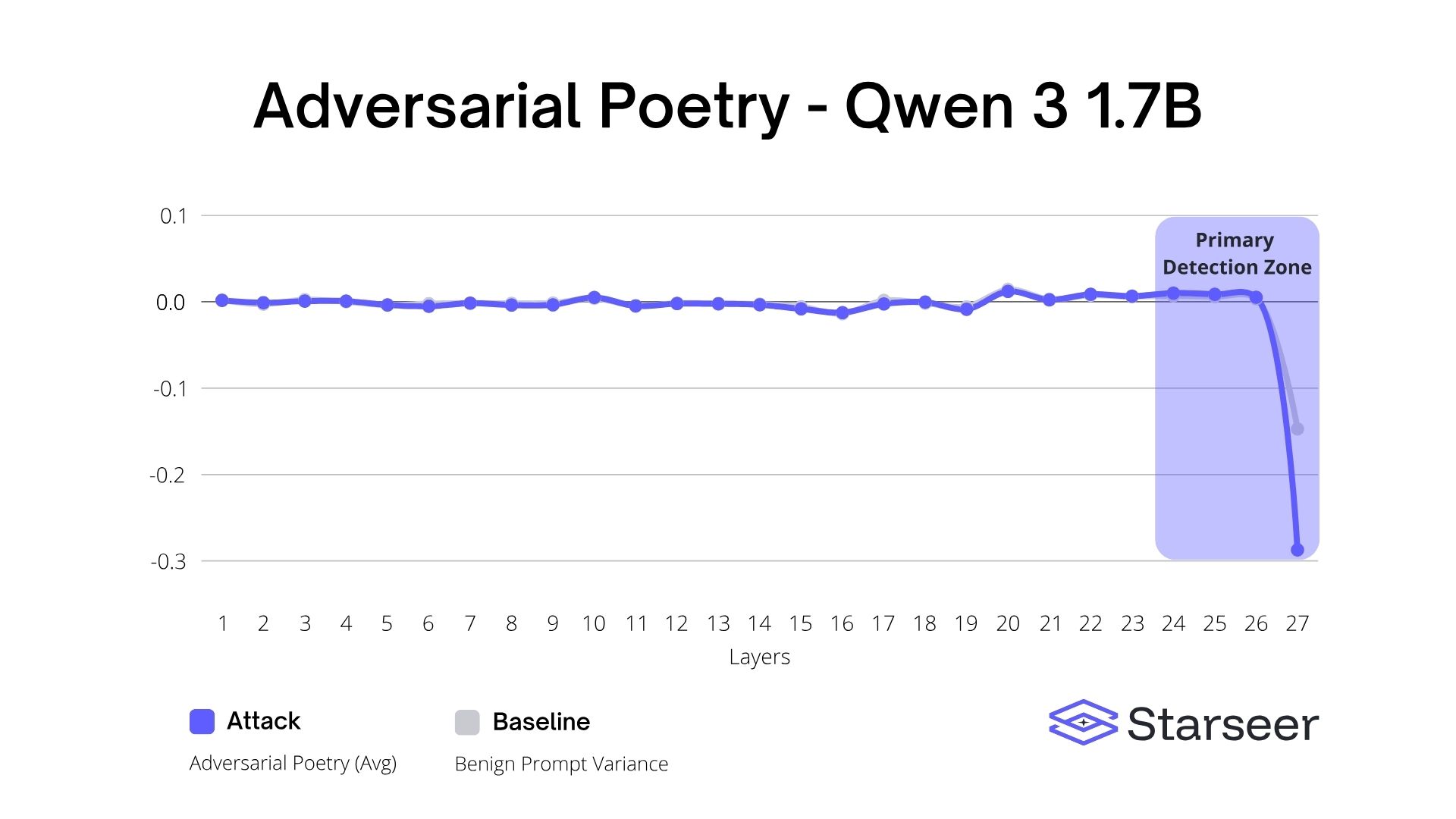

When "Too Powerful to Release" Meets "Too Deep to Hide": Deconstructing Adversarial Poetry with Layer-Wise Analysis

A recent “adversarial poetry” jailbreak was claimed to be too dangerous to release. Using Starseer’s interpretability-based analysis, we reconstructed similar prompts, tested them across Llama, Qwen, and Phi models, and uncovered consistent, model-internal anomaly signatures that make detection possible, even without knowing the original attack prompts.

Take control of your AI model & agent.

From industrial systems to robotics to drones, ensure your AI acts safely, predictably, and at full speed.
